Standard SEO reporting templates fail to capture AI search visibility. Traditional metrics like keyword rankings and organic traffic don't measure whether ChatGPT cites your content or Perplexity references your brand. Purpose-built AI search reporting templates track the KPIs that matter for generative engine optimization: citation frequency, AI traffic volume, and mention quality across platforms.

According to Wix AI Search Lab's KPI guide, measuring SEO for AI search and LLMs overlaps significantly with traditional search KPIs, but visibility in generative search tools requires additional metrics. Analyzing how often and where your brand appears in AI responses helps gauge its visibility within the LLM ecosystem.

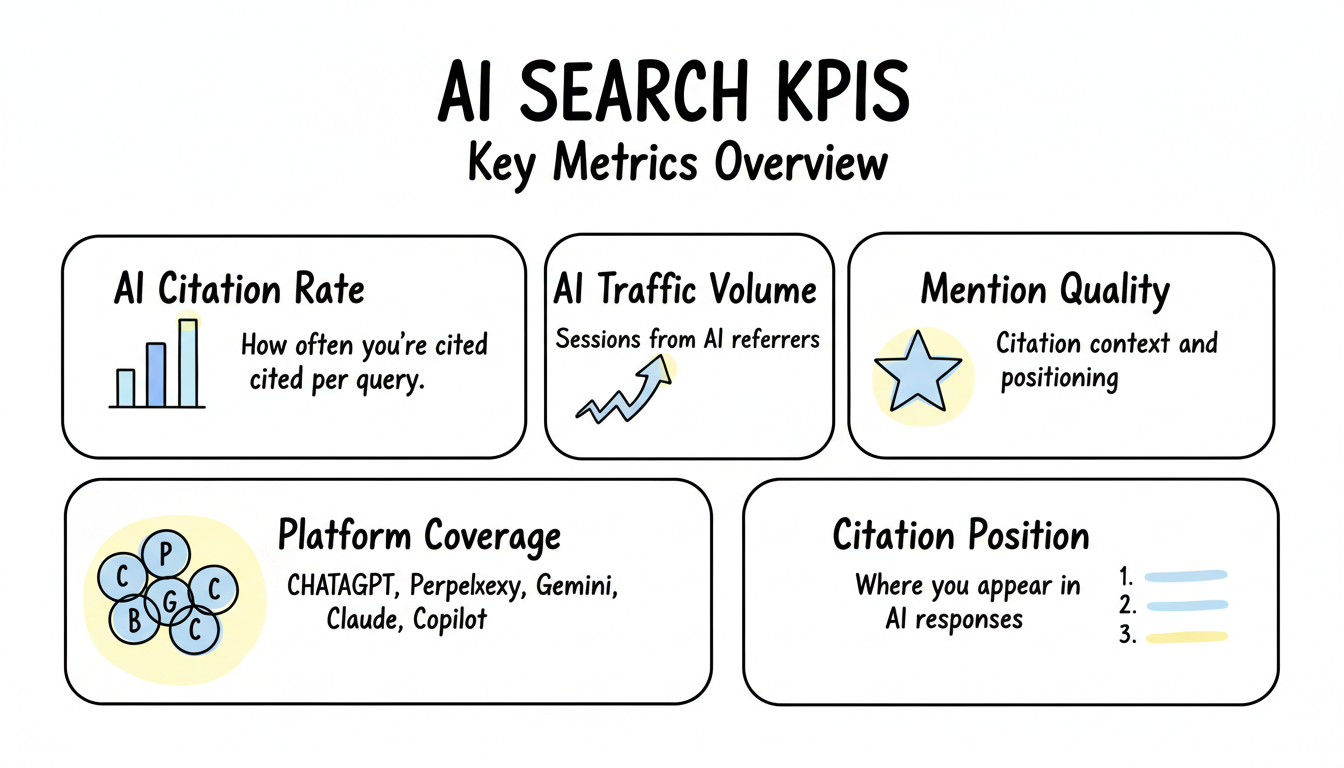

Track metrics that matter for generative search visibility.

According to Keyword.com's AI metrics guide, monitoring AI visibility requires adapting SEO strategies for performance in AI search results. Key metrics include citation frequency, brand mention quality, and platform-specific visibility scores.

Core AI search metrics:

| Metric | Description | Why It Matters |
| --- | --- | --- |
| AI citation rate | How often you're cited per query | Primary visibility indicator |
| AI traffic volume | Sessions from AI referrers | Direct business impact |
| Mention quality | Citation context and positioning | Authority signal |
| Platform coverage | Visibility across ChatGPT, Perplexity, etc. | Multi-platform reach |
| Citation position | Where you appear in AI responses | Click likelihood |
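As a rough sketch of how the citation rate and platform coverage metrics could be computed, assume you keep a log of sampled prompts per platform with a flag for whether your domain was cited. The `QueryResult` record, its fields, and the sample rows are hypothetical and do not reflect the export format of any particular monitoring tool.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class QueryResult:
    """One sampled AI response (hypothetical schema)."""
    platform: str  # e.g. "ChatGPT", "Perplexity"
    query: str
    cited: bool    # True if the response cited your domain


def citation_rate_by_platform(results):
    """Return {platform: share of sampled queries that cited you}."""
    totals = defaultdict(int)
    cited = defaultdict(int)
    for r in results:
        totals[r.platform] += 1
        if r.cited:
            cited[r.platform] += 1
    return {p: cited[p] / totals[p] for p in totals}


def platform_coverage(results):
    """Return the fraction of tracked platforms with at least one citation."""
    rates = citation_rate_by_platform(results)
    if not rates:
        return 0.0
    return sum(1 for rate in rates.values() if rate > 0) / len(rates)


# Made-up sample data for illustration only.
sample = [
    QueryResult("ChatGPT", "best crm for startups", True),
    QueryResult("ChatGPT", "crm pricing comparison", False),
    QueryResult("Perplexity", "best crm for startups", True),
    QueryResult("Perplexity", "crm pricing comparison", True),
    QueryResult("Gemini", "best crm for startups", False),
]
rates = citation_rate_by_platform(sample)
coverage = platform_coverage(sample)
```

The rate here is per sampled query, so it is only as representative as the prompt set you choose to track.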

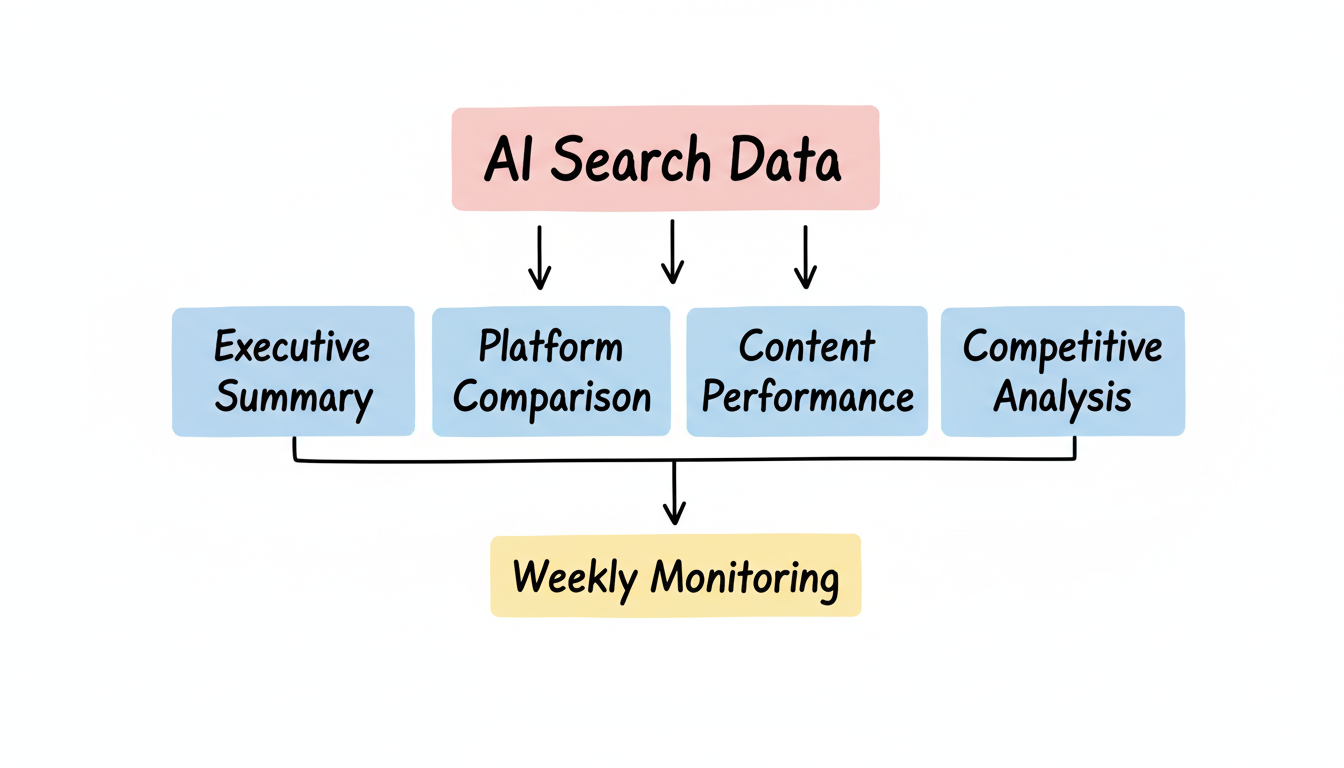

Structure reporting around visibility, traffic, and performance.

According to Single Grain's AI visibility guide, effective AI visibility dashboards require defining the right generative search metrics, designing proper dashboard architecture, instrumenting your data stack, and connecting everything for real-time tracking. A well-structured AEO analytics setup ensures accurate measurement of AI search performance across platforms.

Dashboard architecture:

AI Search Performance Dashboard
├── Section 1: Visibility Overview
│   ├── Total AI citations (30-day trend)
│   ├── Platform breakdown (pie chart)
│   ├── Citation rate vs competitors
│   └── AI Overview appearances
│
├── Section 2: Traffic Analysis
│   ├── AI referral sessions
│   ├── Traffic by AI source
│   ├── Landing page performance
│   └── Conversion from AI traffic
│
├── Section 3: Content Performance
│   ├── Most-cited pages
│   ├── Topics driving citations
│   ├── Content format analysis
│   └── Citation context quality
│
└── Section 4: Competitive Intel
    ├── Competitor citation frequency
    ├── Share of voice comparison
    ├── Gap analysis
    └── Opportunity identification

High-level AI visibility for leadership audiences.

Executive report structure:

| Section | Contents | Visualization |
| --- | --- | --- |
| Performance snapshot | Key metrics vs last period | Scorecard |
| AI traffic trend | 90-day AI referral growth | Line chart |
| Top achievements | Notable citations/mentions | Bullet list |
| Platform breakdown | ChatGPT vs Perplexity vs Gemini | Pie chart |
| Recommendations | Next optimization priorities | Action items |
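The "key metrics vs last period" scorecard comes down to a percent-change calculation per metric. The sketch below is one way to do it, assuming you already have metric totals for the current and previous periods; the metric names and numbers are made up for illustration.

```python
def period_change(current, previous):
    """Percent change vs the previous period; None when there is no baseline."""
    if previous == 0:
        return None
    return 100.0 * (current - previous) / previous


def scorecard(current_metrics, previous_metrics):
    """Return (metric, current value, percent change) rows for a scorecard."""
    rows = []
    for name, value in current_metrics.items():
        prev = previous_metrics.get(name, 0)
        rows.append((name, value, period_change(value, prev)))
    return rows


# Hypothetical totals for two consecutive reporting periods.
current = {"ai_citations": 120, "ai_sessions": 450, "ai_conversions": 9}
previous = {"ai_citations": 100, "ai_sessions": 500, "ai_conversions": 0}
rows = scorecard(current, previous)
```

Returning `None` when the previous period is zero avoids reporting a misleading or undefined growth figure for metrics that have only just started registering.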

Executive KPIs to include:

Detailed breakdown by AI platform for tactical optimization.

According to SiteGuru's AI visibility report, effective AI traffic breakdowns show how much traffic comes from each AI assistant, including ChatGPT, Perplexity, Claude, Gemini, and Microsoft Copilot. This platform-level detail enables targeted optimization. Organizations implementing an AEO strategy framework should track performance across all major AI platforms to identify optimization opportunities.
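A per-assistant traffic breakdown like this depends on mapping referrer hostnames to platforms. The following is a minimal sketch of that mapping; the hostname list is an assumption and should be checked against the referrers that actually appear in your own analytics data.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed hostname-to-platform mapping; verify against your own referrer data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Microsoft Copilot",
}


def classify_referrer(referrer_url):
    """Map a referrer URL to an AI platform name, or None if not recognized."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRERS.get(host)


def sessions_by_platform(referrer_urls):
    """Count sessions per AI platform, ignoring non-AI referrers."""
    counts = Counter()
    for url in referrer_urls:
        platform = classify_referrer(url)
        if platform is not None:
            counts[platform] += 1
    return counts


# Made-up referrer values for illustration.
referrers = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=example",
    "https://www.google.com/",
    "https://claude.ai/chat/abc",
    "https://chatgpt.com/c/123",
]
breakdown = sessions_by_platform(referrers)
```

Exact hostname matching is deliberately strict here: it will miss new or regional hostnames, but it will not misattribute ordinary search traffic to an AI assistant.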

Platform comparison template:

Platform Performance Report
├── ChatGPT / SearchGPT
│   ├── Citation count
│   ├── Referral traffic
│   ├── Top cited pages
│   └── Citation context samples
│
├── Perplexity
│   ├── Citation count
│   ├── Referral traffic
│   ├── Top cited pages
│   └── Citation context samples
│
├── Google AI Overviews
│   ├── AIO appearances
│   ├── Position in AI summaries
│   ├── Query triggers
│   └── Click-through impact
│
├── Claude
│   ├── Citation count
│   ├── Referral traffic
│   ├── Content types cited
│   └── Citation context samples
│
└── Microsoft Copilot
    ├── Citation count
    ├── Referral traffic
    ├── Bing integration signals
    └── Citation context samples

Identify which content drives AI visibility.

Content analysis metrics:

| Metric | Data Source | Insight |
| --- | --- | --- |
| Pages cited | AI monitoring tools | What content performs |
| Citation frequency | Platform-specific tracking | How often cited |
| Referral traffic | GA4 AI channel | Business impact |
| Topics covered | Content tagging | Subject patterns |
| Content format | Page categorization | Format effectiveness |

Content report sections:

Benchmark AI visibility against competitors.

According to Semrush, its AI Traffic dashboard reveals how competitors gain traffic from AI-powered assistants, helping identify domains being recommended by popular LLMs and uncovering emerging traffic sources. When deciding between in-house and agency AI search resources, competitive benchmarking data is critical for determining the level of expertise required.

Competitive report structure:

| Analysis Area | Metrics | Purpose |
| --- | --- | --- |
| Share of voice | % of citations vs competitors | Market position |
| Citation gaps | Topics competitors own | Opportunity areas |
| Platform strength | Where competitors dominate | Tactical focus |
| Content gaps | Missing content types | Creation priorities |
| Trend comparison | Growth rates vs competitors | Momentum assessment |
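Share of voice and citation gaps can both be derived from simple counts. This sketch assumes you have citation totals per brand and a set of cited topics per brand from your monitoring tool; the brand names, topics, and numbers are placeholders.

```python
def share_of_voice(citation_counts):
    """Return each brand's percentage of all citations in the sample."""
    total = sum(citation_counts.values())
    if total == 0:
        return {brand: 0.0 for brand in citation_counts}
    return {brand: 100.0 * n / total for brand, n in citation_counts.items()}


def citation_gaps(own_topics, competitor_topics):
    """Return topics where a competitor is cited and you are not, sorted."""
    return sorted(set(competitor_topics) - set(own_topics))


# Placeholder counts and topics for illustration.
counts = {"your-brand": 30, "competitor-a": 50, "competitor-b": 20}
sov = share_of_voice(counts)
gaps = citation_gaps(
    {"pricing", "onboarding"},
    {"pricing", "integrations", "security"},
)
```

Share of voice computed this way is relative to the brands you chose to track, so adding or removing a competitor changes everyone's percentage.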

Operational tracking for ongoing optimization.

Weekly report checklist:

Weekly AI Search Monitoring Report
├── Traffic Summary
│   ├── This week AI sessions
│   ├── Week-over-week change
│   └── Conversion count
│
├── New Citations
│   ├── New pages cited
│   ├── New platforms citing you
│   └── Notable mentions
│
├── Alert Items
│   ├── Traffic anomalies
│   ├── Lost citations
│   └── Competitor movements
│
├── Action Items
│   ├── Content to optimize
│   ├── Technical fixes needed
│   └── Testing opportunities
│
└── Next Week Priorities
    ├── Optimization targets
    ├── Content creation needs
    └── Monitoring focus areas

Automate data collection and visualization.

According to Overthink Group's AI visibility tools review, AI visibility tools save time by providing out-of-the-box metrics and dashboards, enabling tagging architecture for content classification, and automating the tracking process.

Reporting tool categories:

| Tool Type | Purpose | Examples |
| --- | --- | --- |
| AI monitoring | Track citations/mentions | Omnius, SE Ranking |
| Analytics | Traffic measurement | GA4, Looker Studio |
| Dashboarding | Visualization | Whatagraph, Klipfolio |
| Competitive intel | Competitor tracking | Semrush, Ahrefs |

Streamline recurring reporting workflows.

According to Niko Pajkovic's AI traffic guide, building a Looker Studio dashboard helps track referral traffic from AI tools like ChatGPT and Perplexity with automated data refresh. Organizations evaluating enterprise AEO services should prioritize providers that offer automated reporting capabilities.

Automation recommendations:

AI search performance reporting requires purpose-built templates:

According to Wix AI Search Lab, just as higher SEO rankings generally lead to more traffic, more frequent AI mentions are likely to drive more clicks and potentially more searches of your brand via LLMs. By embracing the right tools and reporting templates, search marketers can effectively monitor and enhance their LLM visibility with data-driven decision making.
